- Groqは、独自LPUチップにより1秒数百トークン出力の超高速LLM推論基盤を実現

- GroqCloudやChat APIで無料かつ商用利用可能な高速AI環境を提供

- Nvidiaとの提携などで注目度上昇も品質やライセンス範囲の理解が前提

最近のLLMの進化は生成された結果の「精度」だけではなく、処理速度(推論速度)も重要な指標となってきています。

その中で急速に存在感を高めているのがGroqです。GroqはLLM推論を高速化するスタートアップ企業で、GroqCloudやPlayground、APIの利用でLLMを高速に利用できるようになるサービスを展開しています。

その速度はなんとGPT-5.2の約10倍!高コスパ・高速なので様々な活用方法があるようです。

さらに、NvidiaがGroqと非独占的ライセンス契約を結ぶなど生成AI業界の中でも非常に注目度が高くなっています。

この記事ではGroq Chatの使い方や、有効性の検証まで行います。本記事を熟読することで、Groqの凄さを実感し、その他のLLMサービスには戻れなくなるでしょう。

ぜひ、最後までご覧ください。

\生成AIを活用して業務プロセスを自動化/

Groqの概要

Groqとは、1秒で500トークン出力できるAIチャットボットです。これは、GPT-4の「20トークン/秒」と比較しても、25倍の速度を誇ります。

GoogleのTPUを開発した人が立ちあげたスタートアップで、結構前からあるらしい。それが最近になって、話題になったのだとか。

ChatGPTと同様、無料でWebブラウザ上で試すことができ、日本語も対応可能とのこと。

Groqの速さは、LPU(Language Processing Unit)と呼ばれる新しいチップにより実現されており、GPUやCPUを超える計算能力で、生成速度を飛躍的に向上させています。

また、Groqが開発したTensor Streaming Processor(TSP)は、従来のGPUとは異なる新しい処理ユニットで、Linear Processor Unit(LPU)と呼ばれます。このLPUでは、AIにおける計算において効率的なアーキテクチャが採用されているそう。

これにより、計算効率が大幅に向上し、大規模なAIのプロジェクトでもスムーズに動かすことができます。

一方、Groqは生成速度が強みとはいえ、トレードオフとして品質の低下が少ないと言われています。

なお、GPT3.5やLlama2 70Bを上回るLLMについて詳しく知りたい方は、下記の記事を合わせてご確認ください。

Groqの主な特徴と優位性

Groqの最大の特徴は圧倒的な処理速度です。他のAIチャットボットには見られない独自の技術を採用しており、特に以下の3つの点で優れています。

- 高速な処理を支える技術基盤です。GoogleのTPU開発者が技術開発に携わっており、最新のAI技術が活用されています。

- 独自開発のLPU(Language Processing Unit)チップを搭載しています。このチップにより、従来のGPUやCPUよりも高速な処理が可能になりました。

- 商用利用が可能な点も大きな強みです。無料で使えるうえ、企業でも利用できるライセンス形態を採用しています。

これらの特徴により、誰でも無料で高速なAIチャットボットを利用できる環境が整っています。

Groqのライセンス

公式ページGroq Services Agreementによると、Groqが提供するサービスについては下記のように示されています。

| 利用用途 | 可否 |

|---|---|

| 商用利用 |  |

| 改変 |  |

| 配布 |  |

| 特許使用 | 不明 |

| 私的使用 | 不明 |

Groqの使い方

GroqにはGroq Playground、GroqCloudといったサービスがありますが、今回は手軽に使えるGroq Chatを試してみます。

まずは、Groq ChatのWebブラウザ版のページに移動してください。利用は無料ですがログインが必要ですので、Googleアカウントを利用するかメールアドレスを登録してください。

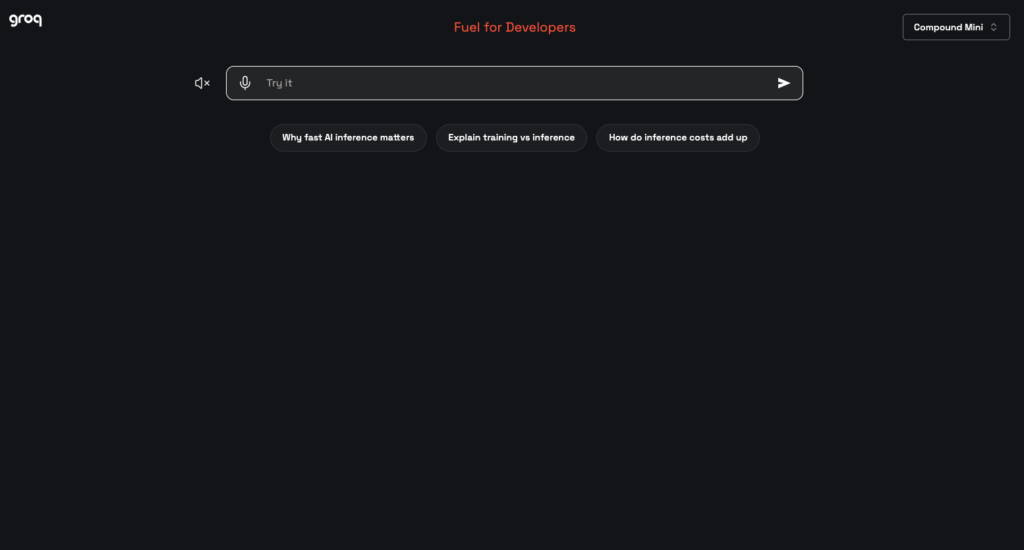

ログイン後は、以下の画面に移動すると思います。

画面上部の「Try it」の部分が、プロンプトを入力する箇所です。

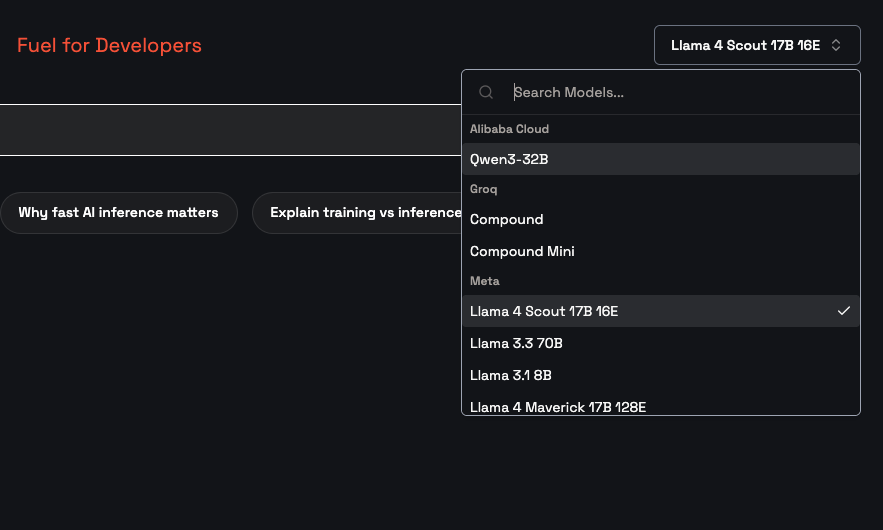

ちなみに、Groqでは、本番運用を想定したプロダクションモデルとして「llama-3.1-8b-instant」「llama-3.3-70b-versatile」「openai/gpt-oss-120b」「whisper-large-v3」といったモデルが利用できます。

また、テスト用途でいつ廃止されるかわからないプレビューモデルには「meta-llama/llama-4-maverick-17b-128e-instruct」「openai/gpt-oss-safeguard-20b」「qwen/qwen3-32b」などのモデルが利用可能です。用途に応じてモデルの変更を行いましょう。

モデルに関しては、右上のプルダウンメニューから選択できます。

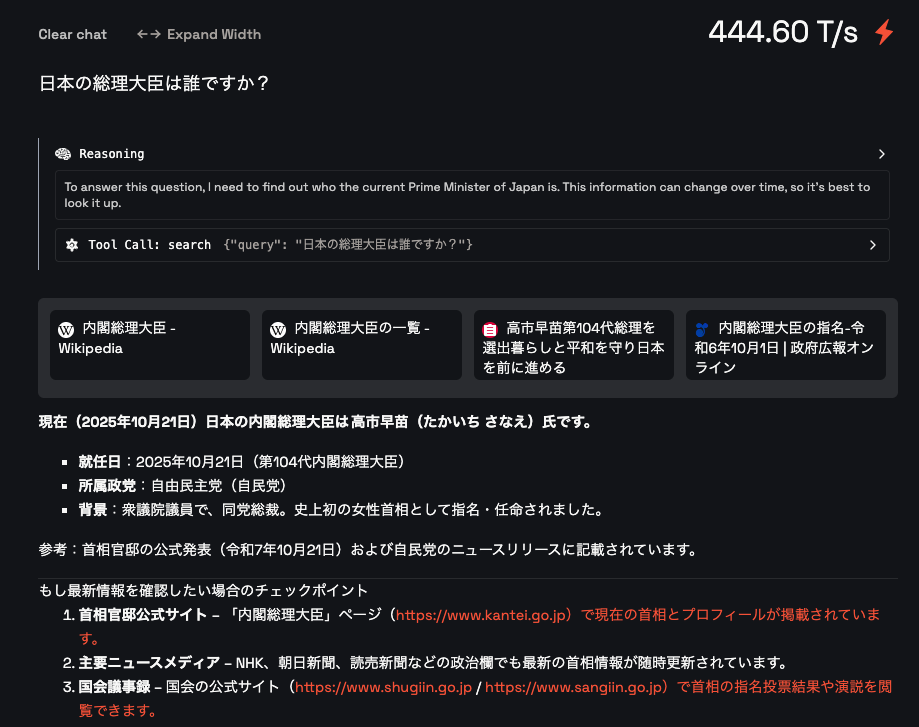

試しに、compound-miniのモデルで日本語で「日本の総理大臣は誰ですか?」とたずねてみます。すると、結果は以下の通りになりました。

インターネットから最新の情報を検索し、現時点での日本の内閣総理大臣を表示してくれました。ちなみに回答の速度が「444.60 T/s」と表示されていますが、これは1秒あたり444.60トークンを生成したということです。逆算すると回答までにかかった時間は約0.00225秒ということになります。早い!

Groqを動かすのに必要なPCのスペック

ChatGPTと同様で、オープンソース化されていないので、必要なスペックは不明です。

■Pythonのバージョン

ローカルで実行していないため不明

■使用ディスク量

ローカルで実行していないため不明

■RAMの使用量

ローカルで実行していないため不明

なお、ChatGPTのオープンソース版であるLlama 2について詳しく知りたい方は、下記の記事を合わせてご確認ください。

GroqとGPTを比較してみた

ここでは、Groq(openai/gpt-oss-120b)とChatGPT(GPT-5.2)を比較します。具体的には、以下のタスクを解かせます。

- 画像認識の深層学習モデルの構築

- 日本語のビジネスメール作成

- 2000文字程度のブログ作成

主に、「回答速度」と「生成結果の精度」を比較していきます。

画像認識の深層学習モデルの構築

まずは、CIFAR-10を用いて画像認識モデルを構築し、「どちらのチャットボットで構築したモデルの方が、精度が高いのか」を検証します。そのために、以下のプロンプトを用いました。

Create a deep learning model of image recognition using the CIFAR-10 dataset, which is readily available in PyTorch. In doing so, please make the model as accurate as possible.

Groq(openai/gpt-oss-120b)の出力結果のコードは、以下の通りです。

クリック(タップ)で表示

import os

import random

import numpy as np

from tqdm import tqdm

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torch.utils.data import DataLoader, random_split

from torch.cuda.amp import GradScaler, autocast

import torchvision

import torchvision.transforms as T

from torchvision.datasets import CIFAR10

from torchvision.models import resnet18, resnet34, resnet50, wide_resnet50_2

# Optional: logging

try:

from torch.utils.tensorboard import SummaryWriter

writer = SummaryWriter()

except Exception:

writer = None

# --------------------------------------------------------------

# 2⃣ Reproducibility

# --------------------------------------------------------------

def set_seed(seed: int = 42):

random.seed(seed)

np.random.seed(seed)

torch.manual_seed(seed)

torch.cuda.manual_seed_all(seed)

torch.backends.cudnn.deterministic = True

torch.backends.cudnn.benchmark = False

set_seed(42)

# --------------------------------------------------------------

# 3⃣ Hyper‑parameters

# --------------------------------------------------------------

BATCH_SIZE = 128 # 128 works on most 12‑GB GPUs

NUM_EPOCHS = 200 # Longer training → higher accuracy

LR = 0.1 # Base LR for SGD (will be scaled by batch)

MOMENTUM = 0.9

WEIGHT_DECAY = 5e-4

WARMUP_EPOCHS = 5

LABEL_SMOOTHING = 0.1

CUTMIX_ALPHA = 1.0 # Set 0 to disable CutMix

MIXUP_ALPHA = 0.2 # Set 0 to disable MixUp

USE_AMP = True # Mixed‑precision training

GRAD_CLIP = 1.0 # None or float

DEVICE = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

# --------------------------------------------------------------

# 4⃣ Data transforms

# --------------------------------------------------------------

# Normalization values for CIFAR‑10

CIFAR10_MEAN = (0.4914, 0.4822, 0.4465)

CIFAR10_STD = (0.2470, 0.2435, 0.2616)

train_transform = T.Compose([

T.RandomResizedCrop(32, scale=(0.8, 1.0), ratio=(0.75, 1.33)),

T.RandomHorizontalFlip(),

T.RandAugment(num_ops=2, magnitude=9), # RandAugment (you can also use AutoAugment)

T.ToTensor(),

T.Normalize(CIFAR10_MEAN, CIFAR10_STD)

])

test_transform = T.Compose([

T.ToTensor(),

T.Normalize(CIFAR10_MEAN, CIFAR10_STD)

])

# --------------------------------------------------------------

# 5⃣ Load datasets

# --------------------------------------------------------------

train_dataset = CIFAR10(root='./data', train=True,

download=True, transform=train_transform)

test_dataset = CIFAR10(root='./data', train=False,

download=True, transform=test_transform)

# Optional: hold‑out a small validation split from the training set

val_ratio = 0.05

val_len = int(len(train_dataset) * val_ratio)

train_len = len(train_dataset) - val_len

train_set, val_set = random_split(train_dataset,

[train_len, val_len],

generator=torch.Generator().manual_seed(42))

train_loader = DataLoader(train_set, batch_size=BATCH_SIZE,

shuffle=True, num_workers=4, pin_memory=True)

val_loader = DataLoader(val_set, batch_size=BATCH_SIZE,

shuffle=False, num_workers=4, pin_memory=True)

test_loader = DataLoader(test_dataset, batch_size=BATCH_SIZE,

shuffle=False, num_workers=4, pin_memory=True)

# --------------------------------------------------------------

# 6⃣ Model definition (ResNet‑18)

# --------------------------------------------------------------

def get_resnet18(num_classes: int = 10, pretrained: bool = True) -> nn.Module:

model = resnet18(pretrained=pretrained)

# CIFAR‑10 images are 32×32 → adjust the first conv layer if using ImageNet weights

# The original ResNet expects 224×224, but the pretrained weights still work.

# Replace the classifier:

model.fc = nn.Linear(model.fc.in_features, num_classes)

# (Optional) Freeze early layers to speed up the first few epochs

# for name, param in model.named_parameters():

# if "layer1" not in name: # keep layer1 trainable

# param.requires_grad = False

return model

model = get_resnet18(num_classes=10, pretrained=True).to(DEVICE)

# Count parameters (useful sanity check)

print(f"Total params: {sum(p.numel() for p in model.parameters()):,}")

# --------------------------------------------------------------

# 7⃣ Loss + MixUp / CutMix utilities

# --------------------------------------------------------------

criterion = nn.CrossEntropyLoss(label_smoothing=LABEL_SMOOTHING).to(DEVICE)

def rand_bbox(size, lam):

"""Generate random box for CutMix."""

W = size[2]

H = size[3]

cut_rat = np.sqrt(1. - lam)

cut_w = int(W * cut_rat)

cut_h = int(H * cut_rat)

# uniform

cx = np.random.randint(W)

cy = np.random.randint(H)

bbx1 = np.clip(cx - cut_w // 2, 0, W)

bby1 = np.clip(cy - cut_h // 2, 0, H)

bbx2 = np.clip(cx + cut_w // 2, 0, W)

bby2 = np.clip(cy + cut_h // 2, 0, H)

return bbx1, bby1, bbx2, bby2

def mixup_data(x, y, alpha=MIXUP_ALPHA):

"""Returns mixed inputs, pairs of targets, and lambda."""

if alpha > 0:

lam = np.random.beta(alpha, alpha)

else:

lam = 1

batch_size = x.size()[0]

index = torch.randperm(batch_size).to(DEVICE)

mixed_x = lam * x + (1 - lam) * x[index, :]

y_a, y_b = y, y[index]

return mixed_x, y_a, y_b, lam

def cutmix_data(x, y, alpha=CUTMIX_ALPHA):

"""Applies CutMix and returns mixed inputs + targets."""

if alpha > 0:

lam = np.random.beta(alpha, alpha)

else:

lam = 1

batch_size = x.size()[0]

index = torch.randperm(batch_size).to(DEVICE)

bbx1, bby1, bbx2, bby2 = rand_bbox(x.size(), lam)

x[:, :, bby1:bby2, bbx1:bbx2] = x[index, :, bby1:bby2, bbx1:bbx2]

# adjust lambda to exactly match pixel ratio

lam = 1 - ((bbx2 - bbx1) * (bby2 - bby1) / (x.size()[-1] * x.size()[-2]))

y_a, y_b = y, y[index]

return x, y_a, y_b, lam

# --------------------------------------------------------------

# 8⃣ Optimizer & LR schedule (SGD + CosineAnnealing with warm‑up)

# --------------------------------------------------------------

optimizer = optim.SGD(model.parameters(),

lr=LR,

momentum=MOMENTUM,

weight_decay=WEIGHT_DECAY,

nesterov=True)

# Cosine annealing with warm‑up implemented manually

def get_cosine_schedule_with_warmup(optimizer, num_warmup, num_training):

def lr_lambda(current_step):

if current_step < num_warmup:

return float(current_step) / float(max(1, num_warmup))

# cosine decay

progress = float(current_step - num_warmup) / float(max(1, num_training - num_warmup))

return 0.5 * (1. + np.cos(np.pi * progress))

return torch.optim.lr_scheduler.LambdaLR(optimizer, lr_lambda)

total_steps = NUM_EPOCHS * len(train_loader)

scheduler = get_cosine_schedule_with_warmup(optimizer,

num_warmup=WARMUP_EPOCHS * len(train_loader),

num_training=total_steps)

scaler = GradScaler(enabled=USE_AMP)

# --------------------------------------------------------------

# 9⃣ Helper: accuracy

# --------------------------------------------------------------

def accuracy(output, target):

"""Computes top‑1 accuracy."""

with torch.no_grad():

pred = output.argmax(dim=1)

correct = pred.eq(target).sum().item()

return correct / target.size(0)

# --------------------------------------------------------------

# Training epoch

# --------------------------------------------------------------

def train_one_epoch(epoch):

model.train()

running_loss = 0.0

running_acc = 0.0

for batch_idx, (inputs, targets) in enumerate(tqdm(train_loader,

desc=f"Epoch {epoch}/{NUM_EPOCHS} [train]")):

inputs, targets = inputs.to(DEVICE), targets.to(DEVICE)

# ----- MixUp / CutMix -------------------------------------------------

r = np.random.rand()

if CUTMIX_ALPHA > 0 and r < 0.5:

inputs, targets_a, targets_b, lam = cutmix_data(inputs, targets, CUTMIX_ALPHA)

use_mix = True

elif MIXUP_ALPHA > 0 and r < 0.75:

inputs, targets_a, targets_b, lam = mixup_data(inputs, targets, MIXUP_ALPHA)

use_mix = True

else:

use_mix = False

optimizer.zero_grad()

with autocast(enabled=USE_AMP):

outputs = model(inputs)

if use_mix:

loss = lam * criterion(outputs, targets_a) + (1 - lam) * criterion(outputs, targets_b)

else:

loss = criterion(outputs, targets)

scaler.scale(loss).backward()

if GRAD_CLIP:

scaler.unscale_(optimizer)

torch.nn.utils.clip_grad_norm_(model.parameters(), GRAD_CLIP)

scaler.step(optimizer)

scaler.update()

scheduler.step()

# ---- metrics ---------------------------------------------------------

running_loss += loss.item() * inputs.size(0)

if use_mix:

# mixed targets → approximate accuracy using the dominant target

acc = lam * accuracy(outputs, targets_a) + (1 - lam) * accuracy(outputs, targets_b)

else:

acc = accuracy(outputs, targets)

running_acc += acc * inputs.size(0)

epoch_loss = running_loss / len(train_loader.dataset)

epoch_acc = running_acc / len(train_loader.dataset)

if writer:

writer.add_scalar('train/loss', epoch_loss, epoch)

writer.add_scalar('train/acc', epoch_acc, epoch)

print(f"Train – loss: {epoch_loss:.4f} | acc: {epoch_acc*100:.2f}%")

return epoch_loss, epoch_acc

# --------------------------------------------------------------

# 1 1 Validation epoch (no augmentation, no mix)

# --------------------------------------------------------------

def validate_one_epoch(epoch, loader, name='val'):

model.eval()

running_loss = 0.0

running_acc = 0.0

# Handle epoch for tqdm display and TensorBoard logging

epoch_display = str(epoch)

if isinstance(epoch, str) and epoch == 'final':

# For final evaluation, use a descriptive string for display

pass # epoch_display is already 'final'

else:

# For training epochs, use the integer value

epoch_display = f"{epoch}/{NUM_EPOCHS}"

for inputs, targets in tqdm(loader, desc=f"Epoch {epoch_display} [{name}]"):

inputs, targets = inputs.to(DEVICE), targets.to(DEVICE)

with autocast(enabled=USE_AMP):

outputs = model(inputs)

loss = criterion(outputs, targets)

running_loss += loss.item() * inputs.size(0)

running_acc += accuracy(outputs, targets) * inputs.size(0)

epoch_loss = running_loss / len(loader.dataset)

epoch_acc = running_acc / len(loader.dataset)

if writer and isinstance(epoch, int):

writer.add_scalar(f'{name}/loss', epoch_loss, epoch)

writer.add_scalar(f'{name}/acc', epoch_acc, epoch)

print(f"{name.title():5s} – loss: {epoch_loss:.4f} | acc: {epoch_acc*100:.2f}%")

return epoch_loss, epoch_acc

# --------------------------------------------------------------

# 2 2 Main training loop

# --------------------------------------------------------------

best_val_acc = 0.0

patience = 15 # stop if no improvement for 15 epochs

no_improve = 0

for epoch in range(1, NUM_EPOCHS + 1):

train_one_epoch(epoch)

val_loss, val_acc = validate_one_epoch(epoch, val_loader, name='val')

# Save best checkpoint

if val_acc > best_val_acc:

best_val_acc = val_acc

no_improve = 0

torch.save({

'epoch': epoch,

'model_state_dict': model.state_dict(),

'optim_state_dict': optimizer.state_dict(),

'scaler_state_dict': scaler.state_dict(),

'val_acc': val_acc,

}, 'best_resnet18_cifar10.pth')

print(">>> Saved new best model")

else:

no_improve += 1

if no_improve >= patience:

print(f"Early stopping triggered after {epoch} epochs.")

break

print(f"\n=== Training finished ===\nBest validation accuracy: {best_val_acc*100:.2f}%")

# --------------------------------------------------------------

# 3 3 Load best checkpoint & evaluate on the official test set

# --------------------------------------------------------------

checkpoint = torch.load('best_resnet18_cifar10.pth', map_location=DEVICE, weights_only=False)

model.load_state_dict(checkpoint['model_state_dict'])

model.eval()

test_loss, test_acc = validate_one_epoch('final', test_loader, name='test')

print(f"Test set accuracy: {test_acc*100:.2f}%")

# --------------------------------------------------------------

# Wide‑ResNet‑28‑10 (no pre‑training) – implementation taken from torchvision

# --------------------------------------------------------------

from torchvision.models.wide_resnet import Wide_ResNet50_2_Weights # only for reference

def get_wrn28_10(num_classes=10):

# The official WRN‑28‑10 definition (depth=28, widen_factor=10)

# can be built manually; below is a compact version.

from torch import nn

class BasicBlock(nn.Module):

expansion = 1

def __init__(self, in_planes, out_planes, stride=1, dropout_rate=0.0):

super().__init__()

self.bn1 = nn.BatchNorm2d(in_planes)

self.relu = nn.ReLU(inplace=True)

self.conv1 = nn.Conv2d(in_planes, out_planes, kernel_size=3,

stride=stride, padding=1, bias=False)

self.bn2 = nn.BatchNorm2d(out_planes)

self.conv2 = nn.Conv2d(out_planes, out_planes, kernel_size=3,

stride=1, padding=1, bias=False)

self.dropout = nn.Dropout(p=dropout_rate) if dropout_rate > 0 else nn.Identity()

self.shortcut = nn.Sequential()

if stride != 1 or in_planes != out_planes:

self.shortcut = nn.Conv2d(in_planes, out_planes,

kernel_size=1, stride=stride, bias=False)

def forward(self, x):

out = self.relu(self.bn1(x))

shortcut = self.shortcut(out)

out = self.conv1(out)

out = self.relu(self.bn2(out))

out = self.dropout(out)

out = self.conv2(out)

out += shortcut

return out

class WideResNet(nn.Module):

def __init__(self, depth=28, widen_factor=10, dropout_rate=0.0, num_classes=10):

super().__init__()

assert (depth - 4) % 6 == 0, "depth should be 6n+4"

n = (depth - 4) // 6

k = widen_factor

self.in_planes = 16

self.conv1 = nn.Conv2d(3, 16, kernel_size=3, stride=1, padding=1, bias=False)

self.layer1 = self._make_layer(16*k, n, stride=1, dropout_rate=dropout_rate)

self.layer2 = self._make_layer(32*k, n, stride=2, dropout_rate=dropout_rate)

self.layer3 = self._make_layer(64*k, n, stride=2, dropout_rate=dropout_rate)

self.bn = nn.BatchNorm2d(64*k)

self.relu = nn.ReLU(inplace=True)

self.fc = nn.Linear(64*k, num_classes)

self._initialize_weights()

def _make_layer(self, out_planes, blocks, stride, dropout_rate):

strides = [stride] + [1]*(blocks-1)

layers = []

for s in strides:

layers.append(BasicBlock(self.in_planes, out_planes, s, dropout_rate))

self.in_planes = out_planes

return nn.Sequential(*layers)

def _initialize_weights(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, mode='fan_out', nonlinearity='relu')

elif isinstance(m, nn.BatchNorm2d):

nn.init.constant_(m.weight, 1)

nn.init.constant_(m.bias, 0)

elif isinstance(m, nn.Linear):

nn.init.xavier_uniform_(m.weight)

nn.init.constant_(m.bias, 0)

def forward(self, x):

out = self.conv1(x)

out = self.layer1(out)

out = self.layer2(out)

out = self.layer3(out)

out = self.relu(self.bn(out))

out = F.avg_pool2d(out, 8)

out = out.view(out.size(0), -1)

out = self.fc(out)

return out

return WideResNet(depth=28, widen_factor=10, dropout_rate=0.3, num_classes=num_classes)

model = get_wrn28_10().to(DEVICE)

上記のコードを動かしたところ、以下を含むいくつかのエラーが発生しました。

ValueError: invalid literal for int() with base 10: 'final'ChatGPT(GPT-5.2)の出力結果のコードは、以下の通りです。

クリック(タップ)で表示

import math

import os

import random

import time

from dataclasses import dataclass

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torch.utils.data import DataLoader

import torchvision

import torchvision.transforms as T

# -------------------------

# Repro / helpers

# -------------------------

def set_seed(seed: int = 42):

random.seed(seed)

os.environ["PYTHONHASHSEED"] = str(seed)

torch.manual_seed(seed)

torch.cuda.manual_seed_all(seed)

torch.backends.cudnn.benchmark = True # faster; set False for stricter determinism

@torch.no_grad()

def accuracy(logits, targets):

preds = logits.argmax(dim=1)

return (preds == targets).float().mean().item()

# -------------------------

# WideResNet for CIFAR

# (WRN-28-10 default)

# -------------------------

class BasicBlock(nn.Module):

def __init__(self, in_planes, out_planes, stride, drop_rate):

super().__init__()

self.bn1 = nn.BatchNorm2d(in_planes)

self.relu1 = nn.ReLU(inplace=True)

self.conv1 = nn.Conv2d(in_planes, out_planes, kernel_size=3, stride=stride, padding=1, bias=False)

self.bn2 = nn.BatchNorm2d(out_planes)

self.relu2 = nn.ReLU(inplace=True)

self.conv2 = nn.Conv2d(out_planes, out_planes, kernel_size=3, stride=1, padding=1, bias=False)

self.drop_rate = drop_rate

self.equal_in_out = (in_planes == out_planes)

self.shortcut = None

if not self.equal_in_out:

self.shortcut = nn.Conv2d(in_planes, out_planes, kernel_size=1, stride=stride, padding=0, bias=False)

def forward(self, x):

out = self.relu1(self.bn1(x))

shortcut = x if self.equal_in_out else self.shortcut(out) # pre-activation shortcut

out = self.conv1(out)

out = self.relu2(self.bn2(out))

if self.drop_rate > 0:

out = F.dropout(out, p=self.drop_rate, training=self.training)

out = self.conv2(out)

return out + shortcut

class NetworkBlock(nn.Module):

def __init__(self, num_layers, in_planes, out_planes, block, stride, drop_rate):

super().__init__()

layers = []

for i in range(num_layers):

layers.append(

block(

in_planes=in_planes if i == 0 else out_planes,

out_planes=out_planes,

stride=stride if i == 0 else 1,

drop_rate=drop_rate,

)

)

self.layer = nn.Sequential(*layers)

def forward(self, x):

return self.layer(x)

class WideResNet(nn.Module):

def __init__(self, depth=28, widen_factor=10, drop_rate=0.0, num_classes=10):

super().__init__()

assert (depth - 4) % 6 == 0, "WRN depth should be 6n+4"

n = (depth - 4) // 6

k = widen_factor

n_stages = [16, 16 * k, 32 * k, 64 * k]

self.conv1 = nn.Conv2d(3, n_stages[0], kernel_size=3, stride=1, padding=1, bias=False)

self.block1 = NetworkBlock(n, n_stages[0], n_stages[1], BasicBlock, stride=1, drop_rate=drop_rate)

self.block2 = NetworkBlock(n, n_stages[1], n_stages[2], BasicBlock, stride=2, drop_rate=drop_rate)

self.block3 = NetworkBlock(n, n_stages[2], n_stages[3], BasicBlock, stride=2, drop_rate=drop_rate)

self.bn1 = nn.BatchNorm2d(n_stages[3])

self.relu = nn.ReLU(inplace=True)

self.fc = nn.Linear(n_stages[3], num_classes)

self._init_weights()

def _init_weights(self):

for m in self.modules():

if isinstance(m, nn.Conv2d):

nn.init.kaiming_normal_(m.weight, nonlinearity="relu")

elif isinstance(m, nn.BatchNorm2d):

nn.init.ones_(m.weight)

nn.init.zeros_(m.bias)

elif isinstance(m, nn.Linear):

nn.init.zeros_(m.bias)

def forward(self, x):

out = self.conv1(x)

out = self.block1(out)

out = self.block2(out)

out = self.block3(out)

out = self.relu(self.bn1(out))

out = F.adaptive_avg_pool2d(out, 1).flatten(1)

return self.fc(out)

# -------------------------

# MixUp / CutMix

# -------------------------

def rand_bbox(W, H, lam):

cut_rat = math.sqrt(1.0 - lam)

cut_w = int(W * cut_rat)

cut_h = int(H * cut_rat)

cx = random.randint(0, W - 1)

cy = random.randint(0, H - 1)

x1 = max(cx - cut_w // 2, 0)

y1 = max(cy - cut_h // 2, 0)

x2 = min(cx + cut_w // 2, W)

y2 = min(cy + cut_h // 2, H)

return x1, y1, x2, y2

def mixup_cutmix(images, targets, alpha=1.0, cutmix_prob=0.5):

if alpha <= 0:

return images, targets, targets, 1.0

lam = torch.distributions.Beta(alpha, alpha).sample().item()

batch_size = images.size(0)

index = torch.randperm(batch_size, device=images.device)

if random.random() < cutmix_prob:

# CutMix

W = images.size(3)

H = images.size(2)

x1, y1, x2, y2 = rand_bbox(W, H, lam)

images[:, :, y1:y2, x1:x2] = images[index, :, y1:y2, x1:x2]

# adjust lambda to exactly match pixel ratio

lam = 1.0 - ((x2 - x1) * (y2 - y1) / (W * H))

y_a = targets

y_b = targets[index]

return images, y_a, y_b, lam

else:

# MixUp

mixed = lam * images + (1 - lam) * images[index]

y_a = targets

y_b = targets[index]

return mixed, y_a, y_b, lam

# -------------------------

# EMA

# -------------------------

class EMA:

def __init__(self, model: nn.Module, decay=0.9999):

self.decay = decay

self.shadow = {}

self.backup = {}

for name, p in model.named_parameters():

if p.requires_grad:

self.shadow[name] = p.data.clone()

@torch.no_grad()

def update(self, model: nn.Module):

d = self.decay

for name, p in model.named_parameters():

if p.requires_grad:

self.shadow[name].mul_(d).add_(p.data, alpha=(1 - d))

def apply_shadow(self, model: nn.Module):

self.backup = {}

for name, p in model.named_parameters():

if p.requires_grad:

self.backup[name] = p.data.clone()

p.data.copy_(self.shadow[name])

def restore(self, model: nn.Module):

for name, p in model.named_parameters():

if p.requires_grad:

p.data.copy_(self.backup[name])

self.backup = {}

# -------------------------

# Loss with label smoothing

# -------------------------

class LabelSmoothingCE(nn.Module):

def __init__(self, smoothing=0.1):

super().__init__()

self.smoothing = smoothing

def forward(self, logits, target):

n = logits.size(-1)

log_probs = F.log_softmax(logits, dim=-1)

with torch.no_grad():

true_dist = torch.zeros_like(log_probs)

true_dist.fill_(self.smoothing / (n - 1))

true_dist.scatter_(1, target.unsqueeze(1), 1.0 - self.smoothing)

return torch.mean(torch.sum(-true_dist * log_probs, dim=-1))

# -------------------------

# Train / eval

# -------------------------

@dataclass

class Config:

seed: int = 42

epochs: int = 200

batch_size: int = 128

num_workers: int = 4

lr: float = 0.2 # good for CIFAR with batch 128; auto-scaled below

momentum: float = 0.9

weight_decay: float = 5e-4

nesterov: bool = True

warmup_epochs: int = 5

label_smoothing: float = 0.1

mix_alpha: float = 1.0

cutmix_prob: float = 0.5

ema_decay: float = 0.9999

drop_rate: float = 0.0 # can try 0.1 if overfitting

device: str = "cuda" if torch.cuda.is_available() else "cpu"

def get_dataloaders(cfg: Config):

# CIFAR-10 mean/std

mean = (0.4914, 0.4822, 0.4465)

std = (0.2023, 0.1994, 0.2010)

train_tf = T.Compose([

T.RandomCrop(32, padding=4),

T.RandomHorizontalFlip(),

T.RandAugment(num_ops=2, magnitude=9), # strong-ish; try magnitude 7-10

T.ToTensor(),

T.Normalize(mean, std),

])

test_tf = T.Compose([

T.ToTensor(),

T.Normalize(mean, std),

])

train_set = torchvision.datasets.CIFAR10(root="./data", train=True, download=True, transform=train_tf)

test_set = torchvision.datasets.CIFAR10(root="./data", train=False, download=True, transform=test_tf)

train_loader = DataLoader(

train_set, batch_size=cfg.batch_size, shuffle=True,

num_workers=cfg.num_workers, pin_memory=True, persistent_workers=(cfg.num_workers > 0)

)

test_loader = DataLoader(

test_set, batch_size=256, shuffle=False,

num_workers=cfg.num_workers, pin_memory=True, persistent_workers=(cfg.num_workers > 0)

)

return train_loader, test_loader

def make_optimizer_and_scheduler(model, cfg: Config, steps_per_epoch: int):

# Linear LR scaling rule (relative to batch=128)

lr = cfg.lr * (cfg.batch_size / 128)

optimizer = optim.SGD(

model.parameters(),

lr=lr,

momentum=cfg.momentum,

weight_decay=cfg.weight_decay,

nesterov=cfg.nesterov

)

total_steps = cfg.epochs * steps_per_epoch

warmup_steps = cfg.warmup_epochs * steps_per_epoch

def lr_lambda(step):

if step < warmup_steps:

return (step + 1) / max(1, warmup_steps)

# cosine decay

progress = (step - warmup_steps) / max(1, (total_steps - warmup_steps))

return 0.5 * (1.0 + math.cos(math.pi * progress))

scheduler = optim.lr_scheduler.LambdaLR(optimizer, lr_lambda=lr_lambda)

return optimizer, scheduler

@torch.no_grad()

def evaluate(model, loader, device):

model.eval()

total_acc = 0.0

total = 0

for x, y in loader:

x = x.to(device, non_blocking=True)

y = y.to(device, non_blocking=True)

logits = model(x)

bs = x.size(0)

total_acc += accuracy(logits, y) * bs

total += bs

return total_acc / total

def train(cfg: Config):

set_seed(cfg.seed)

train_loader, test_loader = get_dataloaders(cfg)

model = WideResNet(depth=28, widen_factor=10, drop_rate=cfg.drop_rate, num_classes=10).to(cfg.device)

criterion = LabelSmoothingCE(cfg.label_smoothing)

optimizer, scheduler = make_optimizer_and_scheduler(model, cfg, steps_per_epoch=len(train_loader))

scaler = torch.cuda.amp.GradScaler(enabled=(cfg.device == "cuda"))

ema = EMA(model, decay=cfg.ema_decay)

best_acc = 0.0

global_step = 0

for epoch in range(1, cfg.epochs + 1):

model.train()

t0 = time.time()

running_loss = 0.0

running_acc = 0.0

seen = 0

for x, y in train_loader:

x = x.to(cfg.device, non_blocking=True)

y = y.to(cfg.device, non_blocking=True)

# MixUp/CutMix

x_mix, y_a, y_b, lam = mixup_cutmix(x, y, alpha=cfg.mix_alpha, cutmix_prob=cfg.cutmix_prob)

optimizer.zero_grad(set_to_none=True)

with torch.cuda.amp.autocast(enabled=(cfg.device == "cuda")):

logits = model(x_mix)

loss = lam * criterion(logits, y_a) + (1 - lam) * criterion(logits, y_b)

scaler.scale(loss).backward()

scaler.step(optimizer)

scaler.update()

scheduler.step()

ema.update(model)

bs = x.size(0)

running_loss += loss.item() * bs

# For train acc, compare to original y (rough signal; mix makes it fuzzy anyway)

running_acc += accuracy(logits, y) * bs

seen += bs

global_step += 1

train_loss = running_loss / seen

train_acc = running_acc / seen

# Eval with EMA weights

ema.apply_shadow(model)

test_acc = evaluate(model, test_loader, cfg.device)

ema.restore(model)

dt = time.time() - t0

if test_acc > best_acc:

best_acc = test_acc

torch.save({"model": model.state_dict(), "ema": ema.shadow, "acc": best_acc, "cfg": cfg.__dict__},

"best_cifar10_wrn.pt")

print(

f"Epoch {epoch:03d}/{cfg.epochs} | "

f"loss {train_loss:.4f} | train_acc {train_acc*100:.2f}% | "

f"test_acc(EMA) {test_acc*100:.2f}% | best {best_acc*100:.2f}% | "

f"time {dt:.1f}s"

)

print(f"\nDone. Best test accuracy (EMA): {best_acc*100:.2f}%")

print("Saved checkpoint: best_cifar10_wrn.pt")

if __name__ == "__main__":

cfg = Config(

seed=42,

epochs=200,

batch_size=128,

num_workers=4,

lr=0.2,

warmup_epochs=5,

label_smoothing=0.1,

mix_alpha=1.0,

cutmix_prob=0.5,

ema_decay=0.9999,

drop_rate=0.0,

)

train(cfg)

ChatGPTの方は最後まで実行することができ、テストデータでのAccuracyは84.74%という結果になりました。

また、回答速度については、以下の結果になりました。

- Groq(openai/gpt-oss-120b):約16秒

- ChatGPT(GPT-5.2):約73秒

日本語のビジネスメール作成

次に、「取引先との商談の場を設けてもらうための、相手方に送るアポイントのメール」を、日本語で出力させてみます。そのために、以下のプロンプトを用いました。

Please write an appointment e-mail to ask your business partner to set up a business meeting. Please output your response in Japanese.クリック(タップ)で表示

件名: 〇〇(貴社名)様との面談日程調整のお願い

-

〇〇株式会社

営業部 〇〇様

平素より大変お世話になっております。

株式会社△△、営業部の□□と申します。

早速ですが、貴社との今後の協業に関しまして、以下の内容で打ち合わせの機会をいただきたくご連絡差し上げました。

目的: 新製品の共同販売プランおよび販売促進施策の検討

場所: 貴社オフィス(またはオンライン会議)

所要時間: 約1時間程度

つきましては、下記候補日をご確認いただき、ご都合の良い日時をご教示いただけますでしょうか。

(ご都合が合わない場合は、別途ご希望

候補日

時間帯

5月10日(火)

10:00~12:00、14:00~16:00

5月11日(水)

9:00~11:00、13:00~15:00

5月12日(木)

10:00~12:00、14:00~16:00

ご多忙のところ恐縮ですが、5月中にお時間をいただけますと幸いです。

ご確認のほど、何卒よろしくお願い申し上げます。

-

敬具

株式会社△△

営業部 □□

電話:03-1234-5678

メール:hanako.suzuki@example.com

-

※オンラインでの開催をご希望の場合は、Zoom(またはMicrosoft Teams)のリンクをご送付いたします。ご遠慮なくお申し付けください。

ChatGPT(GPT-5.2)の出力結果は、以下の通りです。

クリック(タップ)で表示

株式会社〇〇

〇〇様

いつもお世話になっております。

株式会社△△の□□です。

この度、今後のお取り組みにつきまして、一度お打ち合わせのお時間を頂戴できればと存じ、ご連絡いたしました。

ご都合のよろしい日時をいくつかご教示いただけますでしょうか。

当方といたしましては、下記日程ですと比較的調整がしやすくなっております。

・〇月〇日(〇)〇:〇〇〜

・〇月〇日(〇)〇:〇〇〜

・〇月〇日(〇)〇:〇〇〜

上記以外でも、〇〇様のご都合に合わせて調整いたしますので、お気軽にお知らせください。

お忙しいところ恐れ入りますが、何卒よろしくお願い申し上げます。

――――――――――

□□

株式会社△△

TEL:00-0000-0000

MAIL:xxxx@xxxx.co.jp

――――――――――

必要なら、

・かなりフォーマル寄り

・カジュアル寄り

・候補日を出さず「先方提案型」

・営業寄り(提案前提)

みたいにチューニングもできるので、用途を教えてください。

また、回答速度については、以下の結果になりました。

- Groq(openai/gpt-oss-120b):約6秒

- ChatGPT(GPT-5.2):約14秒秒

2000文字以上のブログ作成

最後に、「2000以上のプログラミングに関するブログ記事」を出力させてみます。そのために、以下のプロンプトを用いました。

Please write a blog post that provides an overview of programming in at least 2000 words.

Groq(openai/gpt-oss-120b)の出力結果は、以下の通りです(24891文字)。

クリック(タップ)で表示

Programming 101: A Comprehensive Overview

-

TL;DR – Programming is the art and science of giving computers precise instructions to solve problems. It spans a rich history, many paradigms, dozens of languages, and an ecosystem of tools, practices, and communities that make modern software development possible. This post walks you through the fundamentals, the major ways of thinking about code, the most common languages and tooling, best‑practice habits, and where the field is heading—all in a format that’s useful whether you’re a complete beginner or a seasoned developer looking for a refresher.

-

Table of Contents

What Is Programming?

A Brief History of Programming

Core Concepts Every Programmer Must Know

Programming Paradigms – How We Structure Code

A Survey of Popular Languages

The Modern Development Workflow

Tooling: IDEs, Build Systems, Version Control, and More

Best Practices & Coding Standards

Learning Path & Resources

Emerging Trends & The Future of Programming

Conclusion

-

1. What Is Programming?

At its simplest, programming is the process of creating a set of instructions that a computer can execute to accomplish a task. Those instructions—written in a programming language—are transformed, either directly or through a series of translation steps, into machine code (binary) that the hardware can understand.

Why Do We Program?

Automation – Repeating tedious manual steps (e.g., data entry, file conversion).

Problem solving – Implementing algorithms that compute results faster or more accurately than humans can.

Creativity – Building games, art installations, music, and interactive experiences.

Business enablement – Powering everything from e‑commerce sites to enterprise resource planning (ERP) systems.

From Idea to Execution

Problem definition – What exactly do you want to achieve?

Algorithm design – Break the problem into logical steps.

Implementation – Translate the algorithm into code using a language’s syntax and semantics.

Testing & debugging – Verify the code works and fix any defects.

Deployment – Make the program available to users or other systems.

Programming is therefore an interdisciplinary activity that blends logic, mathematics, communication, and domain‑specific knowledge.

-

2. A Brief History of Programming

Era

Milestones

Key Languages / Concepts

1940s–1950s

First electronic computers (ENIAC, UNIVAC). Programming done with plugboards and machine code.

Assembly, Machine code

1950s

Birth of high‑level languages to improve productivity.

FORTRAN (scientific computing), COBOL (business data processing)

1960s

Structured programming, early compilers, and operating systems.

ALGOL, LISP (functional), BASIC (education)

1970s

System programming, the C language, Unix OS, and the rise of procedural style.

C, Pascal, Smalltalk (first OO language)

1980s

Object‑oriented programming (OOP) mainstream; personal computers explode.

C++, Objective‑C, Perl, Ada

1990s

Internet boom; scripting languages for web and system admin.

Java, JavaScript, PHP, Python, Ruby

2000s

Open‑source movement, Agile methodology, mobile platforms.

C#, Scala, Go, Swift

2010s‑present

Cloud-native, containers, data‑science, AI, and “low‑code/no‑code”.

Rust, Kotlin, TypeScript, Julia, Dart

The evolution reflects a constant tension between expressiveness (how easily we can write what we want) and performance (how fast the computer can run it). Modern languages strive to give you both, often by compiling to efficient bytecode or machine code while offering expressive syntax and powerful abstractions.

-

3. Core Concepts Every Programmer Must Know

Even before you pick a language, there are universal ideas that appear in virtually every programming environment.

3.1. Data Types & Structures

Concept

Description

Typical Examples

Primitive types

Built‑in, indivisible values.

int, float, bool, char

Composite types

Collections of primitives or other composites.

Arrays, lists, tuples

Associative containers

Key‑value mappings.

Dictionaries/Maps, hash tables

Abstract data types (ADTs)

Logical description independent of implementation.

Stack, queue, set, graph

User‑defined types

Custom structures that combine fields and behavior.

struct, class, record

Understanding how data is stored, accessed, and mutated is essential for writing correct, efficient code.

3.2. Control Flow

Conditional statements – if/else, switch/case.

Loops – for, while, do‑while, and functional equivalents (map, filter).

Early exit – break, continue, return.

Control flow determines the order in which statements are executed, which is the backbone of algorithmic logic.

3.3. Functions / Procedures

Pure functions – No side effects, deterministic output.

Impure functions – May modify state, perform I/O.

Higher‑order functions – Accept functions as arguments or return them.

Recursion – A function calling itself (useful for divide‑and‑conquer problems).

Functions help encapsulate logic, promote reuse, and improve readability.

3.4. Variables, Scope, and Lifetime

Variable declaration – Reserving storage.

Scope – Visibility region (global, module, block, lexical).

Lifetime – Duration of existence (static, automatic, dynamic).

Proper scoping prevents naming collisions and reduces bugs caused by unintended sharing of mutable state.

3.5. Memory Management

Manual – Explicit malloc/free (C) or new/delete (C++).

Automatic (GC) – Garbage collectors reclaim unused memory (Java, C#, Python).

Ownership & borrowing – Rust’s compile‑time model guaranteeing safety without a GC.

Memory bugs (leaks, dangling pointers, buffer overflows) are among the most severe and hard‑to‑diagnose errors, so knowing how your language handles memory is crucial.

3.6. Error Handling

Return codes – Traditional C style.

Exceptions – Throw/catch mechanisms (Java, Python, C#).

Result/Either types – Explicit success/failure values (Rust, Haskell, Swift).

Choosing the right strategy makes your code more robust and easier to reason about.

3.7. Concurrency & Parallelism

Multithreading – Multiple threads share memory space.

Multiprocessing – Separate processes (often via OS fork or containers).

Async/Await – Non‑blocking I/O and cooperative multitasking.

Message passing – Actors, channels, or queues (Erlang, Go).

Modern applications must handle many simultaneous operations—understanding the model your language provides helps you write safe, performant code.

-

4. Programming Paradigms – How We Structure Code

A paradigm is a style or philosophy of writing programs. Most languages support multiple paradigms, but each language tends to emphasize one or two.

4.1. Imperative (Procedural)

Idea – Describe how to achieve a result via statements that change program state.

Features – Variables, assignment, loops, explicit order of execution.

Typical languages – C, Pascal, BASIC.

Example (C)

int sum = 0;

for (int i = 1; i <= 100; ++i) {

sum += i;

}

printf("%d\n", sum);

4.2. Object‑Oriented Programming (OOP)

Idea – Model the problem domain as objects that combine data (state) and behavior (methods).

Key principles – Encapsulation, inheritance, polymorphism, abstraction.

Typical languages – Java, C++, C#, Python (supports OOP), Ruby.

Example (Java)

class Counter {

private int value = 0;

public void increment() { value++; }

public int get() { return value; }

}

4.3. Functional Programming (FP)

Idea – Treat computation as the evaluation of pure functions and avoid mutable state.

Key concepts – First‑class functions, immutability, higher‑order functions, recursion, lazy evaluation.

Typical languages – Haskell, Lisp, Erlang, Clojure, Scala, F#, modern JavaScript (with functional style).

Example (Haskell)

sumTo :: Integer -> Integer

sumTo n = sum [1..n] -- List comprehension + built‑in sum (pure)

4.4. Declarative & Logic Programming

Declarative – What the result should look like, not how to compute it.

Logic – Use facts and rules; the engine searches for solutions.

Typical languages – Prolog (logic), SQL (set‑based declarative queries), HTML/CSS (UI layout).

Example (Prolog)

ancestor(X, Y) :- parent(X, Z), ancestor(Z, Y).

ancestor(X, Y) :- parent(X, Y).

4.5. Event‑Driven Programming

Idea – Flow is determined by events (user actions, messages, sensor input).

Common in – GUI toolkits, web browsers, Node.js.

Example (JavaScript)

button.addEventListener('click', () => {

console.log('Button clicked!');

});

4.6. Reactive & Data‑Flow Programming

Idea – Model data as streams that propagate changes automatically.

Frameworks – RxJS, React (with state/props), Elm, Svelte, Flutter’s Stream.

Example (RxJS)

import { fromEvent } from 'rxjs';

const clicks = fromEvent(document, 'click');

clicks.subscribe(e => console.log('Clicked at', e.clientX, e.clientY));

4.7. Choosing a Paradigm

Problem domain – E.g., UI-heavy apps benefit from event‑driven/reactive; high‑performance systems often use imperative + OOP.

Team skill set – Align with what developers already know, or plan for a learning curve.

Ecosystem – Library and framework availability can tilt the decision.

Most production codebases blend paradigms: a Java backend may be object‑oriented but use functional streams (java.util.stream); a Python script can be procedural yet leverage list comprehensions (a functional feature).

-

5. A Survey of Popular Languages

Below is a non‑exhaustive but practical snapshot of languages you’ll encounter in the industry, grouped by their typical use‑cases and characteristics.

Category

Language

Primary Use‑Cases

Compilation Model

Notable Features

Systems / Low‑level

C

OS kernels, embedded, performance‑critical libraries

Native compilation (GCC, Clang)

Minimal runtime, direct memory access

C++

Game engines, high‑frequency trading, scientific simulators

Native (often with templates)

OOP + generic programming, RAII, constexpr

Rust

Safe systems programming, WebAssembly, CLI tools

Native (LLVM)

Ownership model, zero‑cost abstractions, strong memory safety

Enterprise / General‑purpose

Java

Large‑scale web services, Android (legacy), fintech

Bytecode → JVM (Just‑In‑Time)

Strong typing, massive ecosystem, portability

C#

Windows apps, Unity games, backend with .NET Core

IL → CLR (JIT)

LINQ, async/await, rich tooling in Visual Studio

Kotlin

Android, server‑side (Spring), multiplatform

JVM bytecode or native (via Kotlin/Native)

Concise syntax, null‑safety, coroutines

Web & Scripting

JavaScript/TypeScript

Front‑end UI, Node.js back‑ends, serverless

Interpreted/JIT (V8, SpiderMonkey)

Event‑driven, async/await, massive library ecosystem

Python

Data science, automation, prototyping, web (Django/Flask)

Interpreted (CPython) or compiled (Cython)

Batteries‑included stdlib, readable syntax

Ruby

Rapid web development (Rails), scripting

Interpreted (MRI)

Convention over configuration, dynamic

Functional / Academic

Haskell

Compilers, research, high‑assurance systems

Native (GHC)

Pure FP, lazy evaluation, strong type inference

Scala

Big data (Spark), JVM backend

JVM bytecode

Combines OOP + FP, powerful type system

Elixir

Scalable web services, real‑time messaging

BEAM VM (Erlang)

Fault‑tolerant, immutable data, actor model

Data Science & Numerical

R

Statistics, data analysis, visualization

Interpreted (R interpreter)

Rich statistical packages

Julia

High‑performance scientific computing

JIT (LLVM)

Multiple dispatch, easy syntax, speeds close to C

Mobile

Swift

iOS/macOS native development

Native (LLVM)

Safety (optionals), modern syntax

Dart (with Flutter)

Cross‑platform mobile & desktop UI

Ahead‑of‑time (AOT) & JIT for dev

Hot‑reload, expressive UI widgets

Systems / Scripting

Go

Cloud services, micro‑services, DevOps tools

Native (gc)

Simplicity, built‑in concurrency (goroutines)

Shell (Bash, PowerShell)

System automation, pipelines

Interpreted

Direct OS interaction, text processing

Picking the “Right” Language

Project requirements – latency constraints, platform, existing codebase.

Team expertise – learning curve vs. productivity.

Ecosystem maturity – libraries, community support, tooling.

Long‑term maintainability – readability, language stability, backward compatibility.

Most professional developers become polyglots: they master a core language for the majority of their work and learn others as needed.

-

6. The Modern Development Workflow

A well‑structured workflow reduces bugs, accelerates delivery, and makes collaboration smoother. Below is a typical pipeline for a web‑oriented product, but the concepts apply across domains.

6.1. Ideation & Specification

User stories (Agile) or requirements documents (Waterfall).

Mockups / wireframes for UI‑heavy projects (Figma, Sketch).

Technical design – architecture diagrams, data model sketches.

6.2. Setting Up the Project

Step

Description

Tools

Repository

Create a Git repo (GitHub, GitLab, Bitbucket).

git init, gh repo create

Branching model

Decide on Git Flow, GitHub Flow, trunk‑based.

git checkout -b feature/xyz

Dependency management

Declare external libraries and lock versions.

npm, pipenv, cargo, Maven

Build configuration

Automate compilation, packaging, linting.

Makefile, Gradle, Webpack, Bazel

Continuous Integration (CI)

Run tests & static analysis on each commit.

GitHub Actions, GitLab CI, Jenkins, CircleCI

6.3. Writing Code

Follow a coding style (indentation, naming).

Write small, focused functions – single responsibility principle (SRP).

Commit early, commit often – each commit should be a logical unit.

Document public APIs – use docstrings or external tools (Javadoc, Sphinx).

6.4. Testing

Type

Goal

Common Frameworks

Unit tests

Verify individual functions/classes in isolation.

JUnit, pytest, GoogleTest

Integration tests

Validate interaction between components.

Testcontainers, Cypress (E2E), Postman

Property‑based tests

Check that invariants hold over many inputs.

QuickCheck (Haskell), Hypothesis (Python)

Performance tests

Measure latency, throughput, memory.

JMH (Java), Gatling, k6

Static analysis

Detect bugs without executing code.

SonarQube, ESLint, MyPy, clang‑tidy

Test‑driven development (TDD)—write a failing test, then the minimal code to pass it—is a proven practice that yields robust code bases.

6.5. Review & Merge

Pull request (PR) – a proposal to merge changes.

Code review – peers examine logic, style, edge cases.

Automated checks – CI runs tests, linting, security scans.

Merging only after the PR passes review and CI ensures a clean main branch.

6.6. Deployment

Stage

Description

Typical Tools

Staging

Deploy to an environment mirroring production for final verification.

Docker Compose, Kubernetes (dev namespace)

Canary / Blue‑Green

Gradual rollout to a fraction of users to catch regressions.

Flagger, Istio, AWS CodeDeploy

Production

Full release to end users.

Helm charts, Terraform, CI/CD pipelines

Observability

Monitoring, logging, tracing to detect issues.

Prometheus + Grafana, ELK stack, Jaeger, Datadog

Feedback loops—especially from monitoring—inform the next iteration of development.

-

7. Tooling: IDEs, Build Systems, Version Control, and More

7.1. Integrated Development Environments (IDEs)

IDE

Languages

Strengths

Visual Studio Code

Almost any (via extensions)

Lightweight, rich ecosystem, built‑in Git

IntelliJ IDEA

Java, Kotlin, Groovy, Scala

Deep refactoring, intelligent code completion

Visual Studio

C#, C++, .NET

Debugger, profiler, Windows‑centric tooling

Eclipse

Java, C/C++

Mature, plugin‑rich, open source

PyCharm

Python

Scientific notebooks, virtualenv integration

Xcode

Swift, Objective‑C

iOS/macOS UI designers, Instruments profiling

IDEs provide syntax highlighting, code completion, debuggers, and often refactoring tools that dramatically speed up development.

7.2. Build & Package Management

Build System

Language(s)

What It Does

Make

C/C++, many

Simple rule‑based builds, widely available

CMake

C/C++, Fortran

Cross‑platform generation of native build files

Maven/Gradle

Java, Kotlin

Dependency resolution, lifecycle management

npm/Yarn/pnpm

JavaScript/TypeScript

Package registry, script runner

Cargo

Rust

Builds, tests, documentation, crates.io integration

pip / Poetry

Python

Dependency lock files, virtualenv handling

Bazel

Multi‑language (C++, Java, Go, etc.)

Scalable, reproducible builds for large monorepos

Docker

All (containerized)

Build images for consistent runtime environment

A good build system handles incremental compilation, dependency graphs, and artifact publishing.

7.3. Version Control Systems (VCS)

Git – De‑facto standard; distributed, branching cheap, huge ecosystem.

Mercurial – Similar to Git but with a simpler UI; used by some legacy projects.

Subversion (SVN) – Centralized model; still used in some enterprise contexts.

Key concepts: commits, branches, tags, rebasing, merge conflicts, hooks.

7.4. Debugging & Profiling

Tool

Platform

Typical Use

GDB

Linux/macOS

Low‑level debugging (C/C++)

LLDB

macOS/iOS

Modern alternative to GDB

Visual Studio Debugger

Windows

Full‑featured UI debugger

Chrome DevTools

Web

JavaScript debugging, performance timeline

PyCharm Debugger

Python

Step‑through, variable inspection

Perf / Flamegraph

Linux

CPU profiling

Valgrind

Linux

Memory leak detection

Wireshark

Network

Packet capture and analysis (useful for networked apps)

Profilers let you locate hot spots and memory bottlenecks, enabling performance‑guided optimization.

7.5. Collaboration & Documentation

Confluence / Notion – Knowledge base and design docs.

Swagger / OpenAPI – API contract documentation + interactive testing.

Docusaurus / MkDocs – Static site generators for developer docs.

Slack / Discord / Teams – Real‑time communication.

Good documentation reduces onboarding time and minimizes “tribal knowledge” loss.

-

8. Best Practices & Coding Standards

Below is a distilled checklist that applies across most languages and project types.

8.1. Write Self‑Documenting Code

Meaningful names – totalRevenue, isActive, fetchUserById.

Limit function length – Aim for ≤ 30 lines (or one screen).

Prefer composition over inheritance – Reduces tight coupling.

8.2. Keep Things DRY (Don’t Repeat Yourself)

Extract common logic into reusable functions or modules.

Use language features like generics, templates, or macros when appropriate.

8.3. Follow the SOLID Principles (for OOP)

Principle

Meaning

Single Responsibility

A class should have one reason to change.

Open/Closed

Entities should be open for extension, closed for modification.

Liskov Substitution

Subtypes must be substitutable for their base types.

Interface Segregation

Prefer many specific interfaces over one “fat” one.

Dependency Inversion

Depend on abstractions, not concrete implementations.

8.4. Defensive Programming

Validate inputs early (assert, require).

Use optionals / Result types rather than null when the language supports them.

Sanitize data before using it in security‑critical contexts (SQL, file paths).

8.5. Consistent Formatting

Adopt a formatter (e.g., prettier, black, clang-format).

Enforce via CI (run formatter in a pre‑commit hook).

8.6. Write Automated Tests Early

Coverage target – 70‑80% is a common baseline; aim for high coverage of critical paths.

Fast feedback – Unit tests should run in seconds; keep them in the CI pipeline.

8.7. Secure Coding Practices

Threat

Mitigation

SQL injection

Parameterized queries / ORM

Cross‑site scripting (XSS)

Encode output, CSP

Buffer overflow

Use safe languages or bounds‑checked APIs

Insecure deserialization

Validate schemas, avoid pickle/eval on untrusted data

Hard‑coded secrets

Store in secret managers (Vault, AWS Secrets Manager)

8.8. Performance Awareness

Avoid premature optimization – First make it work, then make it fast.

Profile before optimizing – Use a profiler to find real bottlenecks.

Understand algorithmic complexity – Big‑O notation guides design choices.

8.9. Documentation & Knowledge Sharing

Keep README concise: purpose, quick start, how to test, how to contribute.

Use inline comments sparingly—explain “why”, not “what”.

Maintain CHANGELOG for versioned releases.

8.10. Continuous Learning

Follow language release notes.

Participate in code reviews; they’re a two‑way street.

Contribute to open‑source or internal tooling to deepen expertise.

-

9. Learning Path & Resources

Below is a staged roadmap for someone starting from zero, with concrete resources at each step.

Stage

Goal

Suggested Resources

1. Foundations

Understand what a computer is, how code becomes executable, basic programming concepts (variables, loops).

“Computer Science 101” (Coursera), “Automate the Boring Stuff with Python” (online book), Khan Academy (Intro to programming)

2. First Language (Python)

Write simple scripts, work with data structures, practice debugging.

Python.org tutorials, “Python Crash Course” (Eric Matthes), exercism.io (Python track)

3. Version Control

Git basics: commit, branch, merge, pull request.

Git Handbook (GitHub), “Pro Git” (free book)

4. Algorithms & Data Structures

Learn sorting, searching, recursion, linked lists, trees, graphs.

“Algorithms” by Sedgewick & Wayne (online), LeetCode (Easy/Medium)

5. Second Language (JavaScript/TypeScript)

Build interactive web pages, understand event loop, asynchronous code.

MDN Web Docs, “Eloquent JavaScript” (online), freeCodeCamp

6. Testing & CI

Write unit tests, set up a CI pipeline.

Test‑Driven Development by Example (Beck), GitHub Actions tutorials

7. OOP & Design Patterns

Apply SOLID principles, learn common patterns (Factory, Singleton, Observer).

“Design Patterns: Elements of Reusable Object‑Oriented Software” (Gang of Four), Refactoring.guru

8. Functional Programming Basics

Pure functions, immutability, higher‑order functions.

“Learn You a Haskell for Great Good!” (online), “Functional Programming in Scala”

9. Systems Programming (optional)

Understand memory layout, pointers, concurrency primitives.

“The Rust Programming Language” (online), “Operating Systems: Three Easy Pieces”

10. Real‑World Project

Contribute to open‑source, build a personal portfolio app, or join a hackathon.

GitHub “good first issue” tags, Hacktoberfest, personal GitHub pages

11. Advanced Topics

Cloud-native (Kubernetes), micro‑services, DevOps, AI/ML pipelines.

“Kubernetes Up & Running”, Coursera “Machine Learning” (Andrew Ng), Pluralsight courses

Learning Tips

Practice daily – 30‑60 minutes of coding beats occasional marathon sessions.

Explain what you learn – Write blog posts or teach a friend; teaching solidifies knowledge.

Read code – Open‑source repos give exposure to real‑world patterns.

Iterate – Refactor your own code as you acquire new concepts; that’s the most authentic “real‑world” experience.

-

10. Emerging Trends & The Future of Programming

Trend

What It Means for Developers

Languages / Tools Gaining Traction

AI‑assisted coding

Large language models (LLMs) can autocomplete whole functions, generate tests, or suggest refactors.

GitHub Copilot, Tabnine, OpenAI Codex

Low‑code / No‑code platforms

Business users build apps visually; developers focus on custom extensions.

Mendix, OutSystems, Retool

WebAssembly (Wasm)

Run near‑native code in browsers and serverless environments; expands language choices for the web.

Rust, AssemblyScript, C/C++ compiled to Wasm

Quantum programming

Algorithms expressed for quantum processors, still experimental.

Q# (Microsoft), Qiskit (Python), Cirq (Google)

Rust’s rise

Safety guarantees without GC attract systems, embedded, and even web developers.

Rust’s ecosystem (Tokio, Actix, Wasm)

Observability‑first development

Tracing, metrics, and logging are baked into code from day one.

OpenTelemetry, Prometheus, Grafana

Serverless & Function‑as‑a‑Service (FaaS)

Deploy single functions without managing servers; encourages micro‑granular design.

AWS Lambda, Azure Functions, Cloudflare Workers

Edge computing

Code runs closer to the user (CDN edge), reducing latency.

Cloudflare Workers, Fastly Compute@Edge

Data‑centric programming

Declarative pipelines for massive data streams become mainstream.

Apache Beam, Flink, Snowflake Snowpark

Implications

Continuous learning will become a career‑level skill, not a one‑off event.

Collaboration with AI: developers will need to audit, verify, and adapt AI‑generated code.

Security hygiene grows more critical as the attack surface expands (e.g., supply‑chain attacks on packages).

-

11. Conclusion

Programming is far more than typing code into a text editor; it’s a disciplined approach to turning ideas into reliable, maintainable, and scalable software. By mastering the core concepts—data structures, control flow, functions, memory management, and error handling—you gain a solid foundation that transcends any single language.

Understanding paradigms equips you to choose the most expressive model for a given problem, while familiarity with the ecosystem of tools (IDEs, version control, CI/CD, testing frameworks) makes the development process efficient and collaborative.

Best practices—clear naming, automated testing, code reviews, and security‑first thinking—protect you from the inevitable complexity that large codebases bring. And because the field evolves at breakneck speed, a commitment to learning (through projects, community participation, and staying abreast of emerging trends) is essential for a thriving career.

Whether you’re building a tiny script to automate a daily task, a massive micro‑service platform serving millions of requests, or a cutting‑edge quantum algorithm, the principles outlined in this post will serve as a compass. Keep experimenting, keep reading, and keep coding—software is the language of the modern world, and you now have a roadmap to become fluent.

-

Happy coding! 🚀ChatGPT(GPT-5)の出力結果は、以下の通りです。

クリック(タップ)で表示

Programming: A Comprehensive Overview of the Digital Craft

Introduction

Programming is the backbone of modern technology. From the apps we use daily to the infrastructure powering global finance, transportation, healthcare, and entertainment, programming enables machines to perform complex tasks efficiently and reliably. At its core, programming is the process of designing, writing, testing, and maintaining instructions that computers follow to accomplish specific goals.

While programming once belonged mainly to mathematicians and academic researchers, today it is a widespread skill across industries. Businesses rely on software for operations, marketing, analytics, and customer engagement. Individuals use programming for automation, data analysis, creative projects, and entrepreneurship. As technology continues to shape society, understanding programming has become increasingly valuable.

This article provides a broad, foundational overview of programming, covering what programming is, how it works, major programming paradigms, popular languages, development workflows, tools, career paths, and future trends.

-

What Is Programming?

Programming is the act of communicating instructions to a computer using a formal language. Computers cannot understand human language directly. Instead, they require structured instructions written in programming languages, which are later translated into machine code that hardware can execute.

A program typically consists of:

Inputs: Data or signals provided to the system

Processing Logic: Rules or algorithms applied to the data

Outputs: Results generated after processing

For example, a simple calculator program takes numbers as input, applies mathematical logic, and outputs results.

Programming exists because computers are extremely fast but fundamentally literal. They execute instructions exactly as written. Even small mistakes in logic or syntax can produce unexpected results, which is why precision is essential in programming.

-

How Computers Execute Programs

Understanding programming requires basic knowledge of how computers execute instructions.

Machine Code

At the lowest level, computers operate using binary machine code (0s and 1s). Writing software directly in machine code is extremely difficult, so higher-level languages were created.

Compiled Languages

Compiled languages convert code into machine instructions before execution. Examples include:

C

C++

Rust

Go

Advantages:

High performance

Low-level system access

Disadvantages:

Longer build process

Platform-specific binaries

Interpreted Languages

Interpreted languages run through an interpreter at runtime. Examples include:

Python

JavaScript

Ruby

Advantages:

Faster development

Cross-platform flexibility

Disadvantages:

Slower runtime performance compared to compiled languages

Hybrid Approaches

Some languages, such as Java and C#, compile to intermediate bytecode and run inside virtual machines, balancing portability and performance.

-

Core Programming Concepts

Regardless of language, most programming relies on common foundational concepts.

Variables

Variables store data values. They act like labeled containers.

Example:

age = 25

Data Types

Common data types include:

Integers (whole numbers)

Floating point numbers (decimals)

Strings (text)

Booleans (true/false)

Arrays or lists (collections of values)

Objects (structured data)

Control Flow

Control flow determines how programs make decisions and repeat actions.

Includes:

If/else conditions

Loops (for, while)

Switch statements

Functions

Functions group reusable logic.

Benefits:

Cleaner code organization

Easier debugging

Reusability

Algorithms

An algorithm is a step-by-step procedure for solving a problem. Efficient algorithms improve performance and scalability.

-

Programming Paradigms

Programming paradigms are styles or approaches to writing code.

Procedural Programming

Focuses on step-by-step instructions.

Example languages:

C

Pascal

Used in system-level programming and performance-critical applications.

-

Object-Oriented Programming (OOP)

Organizes code into objects that combine data and behavior.

Key principles:

Encapsulation

Inheritance

Polymorphism

Abstraction

Popular OOP languages:

Java

C++

C#

Python (multi-paradigm)

OOP is widely used in enterprise applications and large systems.

-

Functional Programming

Emphasizes pure functions and immutable data.

Characteristics:

No shared state

Predictable outputs

Easier parallel processing

Languages:

Haskell

Elixir

Scala

Functional features in JavaScript and Python

-

Declarative Programming

Focuses on what result is desired, not how to compute it.

Examples:

SQL for databases

HTML for document structure

-

Popular Programming Languages

Python

Strengths:

Easy to learn

Large ecosystem

Strong in AI, data science, automation

Common uses:

Machine learning

Data analysis

Web development

Scripting

-

JavaScript

Strengths:

Runs in browsers

Full-stack capability with Node.js

Massive ecosystem

Common uses:

Web front-end

Web back-end

Mobile apps

Desktop apps

-

Java

Strengths:

Stability

Enterprise adoption

Cross-platform via JVM

Common uses:

Enterprise systems

Android apps

Large backend systems

-

C and C++

Strengths:

Performance

Hardware-level access

Common uses:

Operating systems

Game engines

Embedded systems

-

Go

Strengths:

Simplicity

Concurrency support

Cloud-native ecosystem

Common uses:

Backend services

DevOps tools

Cloud infrastructure

-

Rust

Strengths:

Memory safety

Performance

Growing ecosystem

Common uses:

Systems programming

WebAssembly

Security-critical software

-

Software Development Lifecycle

Professional programming follows structured workflows.

Planning

Define:

Requirements

Features

Constraints

Timeline

Design

Includes:

System architecture

Database schema

API design

Development

Writing code according to design.

Testing

Types include:

Unit testing

Integration testing

End-to-end testing

Deployment

Releasing software to production environments.

Maintenance

Fixing bugs, updating features, improving performance.

-

Development Tools

Code Editors and IDEs

Popular tools:

VS Code

IntelliJ

PyCharm

Version Control

Git allows developers to track changes and collaborate.

Platforms:

GitHub

GitLab

Bitbucket

Package Managers

Help manage dependencies:

npm (JavaScript)

pip (Python)

cargo (Rust)

-

Programming in Different Domains

Web Development

Includes:

Frontend (UI/UX)

Backend (servers, databases)

APIs

-

Data Science

Focuses on:

Data analysis

Visualization

Machine learning

-

Mobile Development

Platforms:

iOS

Android

Cross-platform frameworks

-

Game Development

Requires:

Graphics programming

Physics engines

Real-time performance optimization

-

Embedded Systems

Programming hardware devices like IoT devices and microcontrollers.

-

Challenges in Programming

Debugging

Finding and fixing errors requires analytical thinking.

Performance Optimization

Balancing speed, memory usage, and scalability.

Security

Preventing vulnerabilities like:

SQL injection

Cross-site scripting

Memory exploits

-

Learning Programming

Effective learning methods include:

Building Projects

Hands-on experience accelerates learning.

Reading Code

Studying open-source projects builds understanding.

Practice Platforms

Coding challenges improve problem-solving skills.

-

Programming Careers

Common roles:

Software Engineer

Data Scientist

DevOps Engineer

AI Engineer

Web Developer

Mobile Developer

Programming skills are increasingly useful even in non-technical roles.

-

The Future of Programming

Several trends are shaping the future.

AI-Assisted Development

AI tools help generate code, detect bugs, and optimize performance.

Low-Code and No-Code Platforms

Allow non-programmers to build applications.

Cloud-Native Development

Microservices and serverless architectures dominate modern infrastructure.

Quantum Computing (Early Stage)

Could redefine algorithms and cryptography.

-

Why Programming Matters

Programming is more than technical skill. It teaches:

Logical thinking

Problem decomposition

System design

Precision communication

These skills apply across industries.

-

Conclusion

Programming is a foundational skill in the digital era. It enables innovation across every sector, from healthcare and education to finance and entertainment. While the landscape of programming languages and tools continues to evolve, the core principles remain stable: logical thinking, structured problem solving, and precise communication with machines.

Whether someone pursues programming professionally or uses it to support another career, understanding programming unlocks powerful capabilities. As automation and software continue to reshape the world, programming literacy will become as fundamental as traditional literacy in many fields.

The best way to understand programming is to start building. Even small projects can provide deep insights into how software works. Over time, programming evolves from writing instructions into designing systems, solving real-world problems, and creating tools that can impact millions of people.

-

If you want, I can also tailor this into:

An SEO blog version (structured headings, keyword targets, meta, FAQ)

A beginner-friendly version (less theory, more examples)

A technical deep-dive (architecture, compilers, runtime internals)

A business-focused version (ROI, automation, digital transformation)

Just tell me which direction you want to take.

また、回答速度については、以下の結果になりました。

- Groq(openai/gpt-oss-120b):約22秒

- ChatGPT(GPT-5.2):約43秒

なお、オフラインで高速出力できるAIチャットボットについて詳しく知りたい方は、下記の記事を合わせてご確認ください。

Groqを使う際の注意点・デメリット

Groqを使用する際には、いくつかの制限事項があることを理解しておきましょう。日本語での出力に対応しているとされていますが、実際のテストでは英語で回答が返ってくることがあります。また、画像認識や複雑なAIプログラム作成などの高度な処理では、エラーが出る可能性があるでしょう。

Groqは回答を作るスピードが非常に速いですが、回答の正確さや質の面ではGPT-5.2の方が優れています。そのため、急いで回答が必要な場合はGroqを、より正確な回答が必要な場合はGPT-5.2を選ぶといった使い分けが大切です。

Groq よくある質問(FAQ)

Groqで高速なテキスト生成を実現しよう

Groqは、Web上で動作できるテキスト生成AIで、ChatGPTの有料版に比べて約10倍も速いです。

とはいえ、品質はChatGPTの方が良いという意見もあるので、きっちりと利用用途を考える必要があると思いました。例えば、以下のようなユースケースが考えられるかと思います。

- 速さや省エネを優先する場合:Groq

- 品質を優先する場合:GPT-5.2など

検証結果を見ても、すべてのタスクにおいてGroqは、GPT-5.2よりも早く完了していました。回答速度に関しては、圧倒していると言えるでしょう。しかし、精度面を見ると、やはりGPT-5.2の方が圧倒しているように思えます。

| タスク | チャットボット | 精度 | 回答速度 |

|---|---|---|---|

| 画像認識の深層学習モデルの構築 | Groq | エラーが発生 | 約16秒 |

| ChatGPT | 62%の精度のモデルが構築できた | 約73秒 | |

| 日本語のビジネスメール作成 | Groq | 自然な日本語で、ある程度使えるメール文を出力できた | 約6秒 |

| ChatGPT | 自然な日本語で、ある程度使えるメール文を出力できた | 約14秒 | |

| 2000文字程度のブログ作成 | Groq | ある程度質の高いブログができた | 約22秒 |

| ChatGPT | ある程度質の高いブログができた | 約43秒 |

ちなみに、あるXユーザーによると、使いどころが限られているが、「LLMで大量の処理をしたい」「GPUに空きがない」という場合に、役に立つかもしれないです。

最後に

いかがだったでしょうか?

圧倒的な生成速度を誇るGroqを活用し、業務効率を飛躍的に向上させませんか?最適なLLMの選定から具体的な導入方法まで、ビジネスでの活用戦略をご提案します。

株式会社WEELは、自社・業務特化の効果が出るAIプロダクト開発が強みです!

開発実績として、

・新規事業室での「リサーチ」「分析」「事業計画検討」を70%自動化するAIエージェント

・社内お問い合わせの1次回答を自動化するRAG型のチャットボット

・過去事例や最新情報を加味して、10秒で記事のたたき台を作成できるAIプロダクト

・お客様からのメール対応の工数を80%削減したAIメール

・サーバーやAI PCを活用したオンプレでの生成AI活用

・生徒の感情や学習状況を踏まえ、勉強をアシストするAIアシスタント

などの開発実績がございます。

生成AIを活用したプロダクト開発の支援内容は、以下のページでも詳しくご覧いただけます。 ︎株式会社WEELのサービスを詳しく見る。

︎株式会社WEELのサービスを詳しく見る。

まずは、「無料相談」にてご相談を承っておりますので、ご興味がある方はぜひご連絡ください。 ︎生成AIを使った業務効率化、生成AIツールの開発について相談をしてみる。

︎生成AIを使った業務効率化、生成AIツールの開発について相談をしてみる。

「生成AIを社内で活用したい」「生成AIの事業をやっていきたい」という方に向けて、生成AI社内セミナー・勉強会をさせていただいております。

セミナー内容や料金については、ご相談ください。

また、サービス紹介資料もご用意しておりますので、併せてご確認ください。

【監修者】田村 洋樹

株式会社WEELの代表取締役として、AI導入支援や生成AIを活用した業務改革を中心に、アドバイザリー・プロジェクトマネジメント・講演活動など多面的な立場で企業を支援している。

これまでに累計25社以上のAIアドバイザリーを担当し、企業向けセミナーや大学講義を通じて、のべ10,000人を超える受講者に対して実践的な知見を提供。上場企業や国立大学などでの登壇実績も多く、日本HP主催「HP Future Ready AI Conference 2024」や、インテル主催「Intel Connection Japan 2024」など、業界を代表するカンファレンスにも登壇している。